Lens of Computation on the Sciences

Many natural and social phenomena may be viewed as inherently computational; they evolve patterns of information that can be described algorithmically and studied through computational models and techniques. A workshop on the computational lens, organized by Avi Wigderson, Herbert H. Maass Professor in the School of Mathematics, highlighted the state-of-art and future challenges of this interaction of computational theory with physics, social sciences, economics, and biology.

Leslie Valiant, T. Jefferson Coolidge Professor of Computer Science and Applied Mathematics in the School of Engineering and Applied Sciences at Harvard University, spoke on the scientific question of how to determine the molecular mechanism of biological evolution, to a level of specificity that it can be simulated by a computer, and to understand why this mechanism can do the remarkable things that it has done within the time that has been available. Valiant’s talk addressed how the tools needed to approach this come from machine learning, the field that studies how mechanisms that achieve complex functionality can arise by a process of adaptation rather than design.

Tim Roughgarden, Associate Professor of Computer Science and (by courtesy) Management Science and Engineering at Stanford University, discussed a number of tools that theoretical computer science offers to reason about economic problems in novel ways. For example, complexity theory sheds new light on the “bounded rationality” of decision-makers; approximation guarantees, originally developed to analyze heuristics, can be usefully applied to Nash equilibria; and computationally efficient algorithms are an essential ingredient to modern, large-scale auction designs.

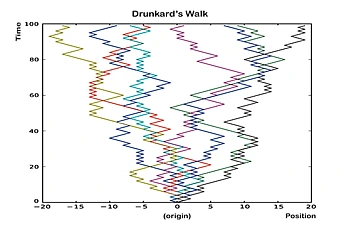

Jon Kleinberg, Tisch University Professor in the Departments of Computer Science and Information Science at Cornell University, spoke about computational phenomena in social interaction. With an increasing amount of social interaction taking place in the digital domain, and often in public online settings, we are accumulating enormous amounts of data about phenomena that were once essentially invisible to us: the collective behavior and social interactions of hundreds of millions of people, recorded at unprecedented levels of scale and resolution. Kleinberg reviewed how modeling and analyzing these phenomena computationally offers new insights into the design of online applications, as well as new perspectives on fundamental questions in the social sciences.

Scott Aaronson, Associate Professor of Electrical Engineering and Computer Science at the Massachusetts Institute of Technology, discussed the quest to understand the limits of efficient computation in the physical universe, and how that quest has been giving us new insights into physics over the last two decades. He explored the following questions: Can scalable quantum computers be built? Can they teach us anything new about physics? Is there some new physical principle that explains why they cannot be built? What would quantum computers be good for? Can quantum computing help us resolve which interpretation of quantum mechanics is the right one? Which systems in nature can be universal computers, and which cannot? Aaronson also described a striking recent application of computational complexity theory to the black hole information loss problem.

Recommended Viewing: Conference talks may be viewed at https://www.ias.edu/video/computationconference/2014/1122.

Recommended Reading: "Ingenious Scott Aaronson: From Computational Complexity to Quantum Mechanics" by Michael Segal (February 19, 2015) in Nautilus: http://nautil.us/issue/21/information/ingenious-scott-aaronson.