Chris Maddison, Member (2019–20) in the Institute for Advanced Study’s School of Mathematics, works on the development of methods for machine learning, with a focus on deep learning. He is an assistant professor at the University of Toronto and a senior researcher at Alphabet Inc.’s DeepMind, a UK artificial intelligence firm. He spoke with Joanne Lipman, the Institute’s Distinguished Journalism Fellow, about eliminating bias in artificial intelligence, the importance of creativity in science, and how to teach students the “usefulness of useless knowledge.” This interview was conducted on June 15, 2020. It has been edited for length and clarity.

Joanne Lipman: What did you work on at IAS?

Chris Maddison: In machine learning, we’re interested in writing algorithms that automate processes. You can think of an algorithm as kind of like a recipe. There’s a set of steps that you follow. And once you follow those steps you’re guaranteed to have solved the problem.

There are a whole bunch of processes that humans happen to be very good at; for example, recognizing whether there is a telephone pole in a picture, or recognizing stop signs. These are surprisingly difficult problems for machine learning.

You have a recipe for determining whether something is a stop sign, but then someone throws you a curve ball: there’s construction, and there’s some makeshift sign that humans would understand means “stop” but your computer doesn’t. For that class of problems, we don’t know how to write an algorithm. Within that big umbrella of a problem, I work on algorithms for different components of this learning process.

JL: I’ve heard you use the analogy of a muffin recipe to describe machine learning.

CM: This is a bit cooked up, sorry for the pun. Suppose you were interested in learning how to bake a muffin. But instead of someone giving you a recipe—which would be the equivalent of an algorithm—someone just gave you a million different muffins, but didn’t tell you what the ingredients were and how to combine them. You have to take those million muffins and turn that into a recipe for baking muffins. You can imagine how difficult that is.

JL: Machine learning and artificial intelligence are being deployed more than ever now, with Covid-19. But they’ve also come under scrutiny. What are the pitfalls?

CM: I’m not an expert on this, but at a very high level, one important thing to understand about machine learning and artificial intelligence is that they’re only as good as the data you put in. This is the “garbage in, garbage out” saying. It applies, for example, if there’s bias in the data, or if the data is not complete.

We already know that countries mean different things when they say this is a Covid case. And if you’re not precise about what your data means, how it was collected, it can really affect the quality of the final predictions that a machine learning algorithm makes. Even if the data is very high quality, it might reflect certain social biases in terms of how we collect and how we interpret it.

JL: I’ve spoken to many scientists who acknowledge that issues like bias are a problem more generally in machine learning, but they don’t see it as their problem. They don’t see it as their responsibility.

CM: I can’t speak for everyone, and I still have lots left to learn on this topic, but it is an ongoing conversation in machine learning. For example, at one of our main conferences, the Conference on Neural Information Processing Systems, there’s now an additional “broader impact” section, to comment on the real-world impact of the paper that we’re putting forward.

I think there is a lot of support for these kinds of things, and we’re all learning how to discuss them. This is a good thing in my mind. It’s a good time for us to listen to the people that are experts on these topics and to learn from them!

JL: You’ve said that you are concerned about the flood of simple algorithms in everyday life, more than about sophisticated AI algorithms. Why is that?

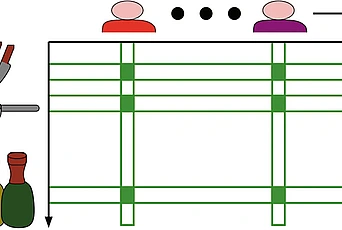

CM: Basically, our view on the internet is filtered by a lot of small decisions and simple algorithms. But what are the impacts of all of these filters working in conjunction? We need to think about not just how bias emerges in a single decision, but also how bias emerges in a network.

Recommendations on TV streaming services are great examples. You might not even call it machine learning or AI, because it might be quite a simple statistical algorithm. In fact, we have a very clear understanding of how specific recommendation systems behave in a single instance. And yet the network effect of many of these recommendation systems can impact our discourse, and the way we interact with each other, and even what we believe is true.

If a recommendation system tends to upweight certain news articles, we might see those as bigger public policy concerns than some other problem. It’s not necessarily because of underlying facts about the world, but more a reflection of what engages us. And so, what are the effects on our behavior of many very simple social-network algorithms interacting with us and each other to engage us? I don’t fully understand. It’s not something that I’ve seen any conclusive analysis about.

JL: What might be the outcome of these multiple, but simple, recommendation engines working together?

CM: Basically, it might bias our perspective about what’s a problem in the world.

For example, what a TV streaming service recommends to us gives us a perspective on what we think is a good TV show. It also tells us what’s popular. There’s obviously a kind of feedback loop there, right? Because most people are going to watch the top ten most popular shows. We’re not necessarily getting an unbiased perspective on what would be the most popular TV shows if people were randomly assigned a TV show from the entire corpus. And so it’s not obvious to me that these recommendation systems are providing an unbiased view on what are the most popular TV shows… or what are the problems in society.

I should say, this isn’t unique to automated recommendation systems or even machine learning. We’ve been recommending things to each other for millennia! Think of this as a concern about which stories get the “front page.” So it’s an old concern, but it can be accelerated by the use of technology.

JL: During the pandemic we’ve all been working at home. Does that impact you? Is the work that you do solitary or collaborative?

CM: IAS has quite a great collaborative spirit. Machine learning happens to be quite collaborative because it’s not just a nice theoretical idea. Often, it’s also relatively impressive experimental work. It requires a fair amount of labor to actually execute a paper. So my work and a lot of machine learning work is quite collaborative. That’s been one of the most interesting things about the pandemic, both the challenges and the opportunities in everyone going remote.

The challenge is maintaining the kind of human connection and the human side of collaboration. But the upside is that now physical distance is almost an obsolete concept. So people in the UK are much more willing to collaborate. There’s no locality that is dominating their lived experience. They get to pick and choose who they actually want to work with in a way that has been quite cool.

If there’s anything that persists beyond this, it’s a more global nature of science, where we hold reading groups where people all over the world can visit, where we’re more comfortable striking collaborations with people an ocean away. All those things I think have been incredibly positive outcomes and it would be great if they continue once we all start seeing each other in person.

JL: You’ve also spoken about how one of the most essential elements for scientific discovery is creativity.

CM: I’ve been thinking a lot about this both in my year at the IAS and once the pandemic hit. IAS was quite nice and monastic and I had a lot of time to think. But in some ways, the pandemic has been even more so, because, especially at the beginning, a lot of the bureaucratic obligations that we normally think of as unmovable suddenly vanished.

I’m incredibly fortunate that I have a stable home life. None of my family was directly affected by the crisis in terms of their health. It’s been much tougher for other people. And so the net effect for me was that I just had more time to think and my social calendar reduced quite a bit, and I felt like in the first couple weeks anyway my creativity shot up.

I’ve been thinking a lot about how we encourage creativity in science. And part of it I think is just literally having free time, unobligated time to ruminate and think about what are the bigger pictures of my work. And also time not to work and not to think. All of these things are incredibly important for creativity.

There’s a tension in science between a culture of rigor and critique, and a culture of creativity. The exciting balance to find is when you don’t sacrifice rigor, but remove that little voice in your head that says, “But oh, that might not work.” And spend a little bit more time in that space where you’re just dreaming. Those moments are magical for me, and I’d like to learn how to teach that and how to get better at it myself.

JL: Is it possible to train your students for creativity?

CM: I’m definitely going to try. This is something I’m hoping to work on with my grad students.

How do you teach creativity? I don’t have any concrete strategies at the moment, but I’d like to implement some when I start at the University of Toronto. One thing to say is that to pull this off, you have to be accepting of discomfort. Because to be in those creative moments, you can’t actually know where you’re going. And so you need to be accepting of that feeling of maybe I won’t go anywhere. Maybe I’ll spend a week and not have done anything of value. It’s important not to know ahead of time what the value of the work you’re doing is in order to be maximally creative.

I’m super inspired by Abraham Flexner’s essay “The Usefulness of Useless Knowledge,” which I’ve read several times. I think to enter that creative space you have to just decide I’m going to just not worry about the use of what I do. It may well be useless, but it doesn’t matter. I’m just going to enter that space.

In computer science, we are highly trained to think about what is the use of our algorithms. I don’t want to sacrifice that training, but I want to bring in a bit of the “OK, but you can take a week to be useless.” And that can result in a lot of ultimately useful things.

JL: That’s a great insight. We hear about Newton doing his best work on gravity during a plague. When people have been isolated, there have been these bursts of creativity.

CM: This might sound a bit weird, but it’s liberating not to have other people in the room for a moment. To just say, I’m going to spend that time exploring. And that’s an important state to cultivate. I think that’s really fascinating, and it makes me excited to see what comes out on the other end of this. Who is going to have those a-ha moments during this pandemic?

JL: It could be you.

CM: It could be me, I guess. But we’ll find out.