What Can We Do with a Quantum Computer?

When I was in middle school, I read a popular book about programming in BASIC (which was the most popular programming language for beginners at that time). But it was 1986, and we did not have computers at home or school yet. So, I could only write computer programs on paper, without being able to try them on an actual computer.

Surprisingly, I am now doing something similar—I am studying how to solve problems on a quantum computer. We do not yet have a fully functional quantum computer. But I am trying to figure out what quantum computers will be able to do when we build them.

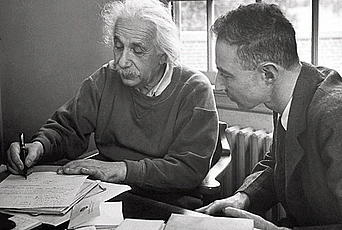

The story of quantum computers begins in 1981 with Richard Feynman, probably the most famous physicist of his time. At a conference on physics and computation at the Massachusetts Institute of Technology, Feynman asked the question: Can we simulate physics on a computer?

The answer was—not exactly. Or, more precisely—not all of physics. One of the branches of physics is quantum mechanics, which studies the laws of nature on the scale of individual atoms and particles. If we try to simulate quantum mechanics on a computer, we run into a fundamental problem. The full description of quantum physics has so many variables that we cannot keep track of all of them on a computer.

If one particle can be described by two variables, then to describe the most general state of n particles, we need 2^n variables. If we have 100 particles, we need 2^100 variables, which is roughly 1 with 30 zeros. This number is so big that computers will never have so much memory.
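To make this arithmetic concrete, here is a minimal Python sketch. The function names and the figure of 16 bytes per variable (one double-precision complex number) are assumptions chosen for illustration; they do not come from Feynman's argument.

```python
def state_variables(n_particles: int) -> int:
    """Variables needed for the most general state of n two-variable particles."""
    return 2 ** n_particles


def memory_bytes(n_particles: int, bytes_per_variable: int = 16) -> int:
    # Assumption: each variable is stored as one 16-byte complex number.
    return state_variables(n_particles) * bytes_per_variable


for n in (10, 30, 50, 100):
    print(f"{n:>3} particles: {state_variables(n):.3e} variables, "
          f"{memory_bytes(n):.3e} bytes")
```

For 100 particles the count is a 31-digit number, that is, roughly 1 followed by 30 zeros.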

By itself, this problem was nothing new—many physicists already knew that. But Feynman took it one step further. He asked whether we could turn this problem into something positive: If we cannot simulate quantum physics on a computer, maybe we can build a quantum mechanical computer—which would be better than the ordinary computers?

This question was asked by the most famous physicist of the time. Yet, over the next few years, almost nothing happened. The idea of quantum computers was so new and so unusual that nobody knew how to start thinking about it.

But Feynman kept telling his ideas to others, again and again. He managed to inspire a small number of people who started thinking: what would a quantum computer look like? And what would it be able to do?

Quantum mechanics, the basis for quantum computers, emerged from attempts to understand the nature of matter and light. At the end of the nineteenth century, one of the big puzzles of physics was color.

The color of an object is determined by the color of the light that it absorbs and the color of the light that it reflects. On an atomic level, we have electrons rotating around the nucleus of an atom. An electron can absorb a particle of light (photon), and this causes the electron to jump to a different orbit around the nucleus.

In the nineteenth century, experiments with heated gases showed that each type of atom absorbs and emits light of only certain specific frequencies. For example, the visible light emitted by hydrogen atoms consists of only four specific colors. The big question was: how can we explain that?

Physicists spent decades looking for formulas that would predict the color of the light emitted by various atoms and models that would explain it. Eventually, this puzzle was solved by Danish physicist Niels Bohr in 1913 when he postulated that atoms and particles behave according to physical laws that are quite different from what we see on a macroscopic scale. (In 1922, Bohr, who would become a frequent Member at the Institute, was awarded a Nobel Prize for this discovery.)

To understand the difference, we can contrast Earth (which is orbiting around the Sun) and an electron (which is rotating around the nucleus of an atom). Earth can be at any distance from the Sun. Physical laws do not prohibit the orbit of Earth from being a hundred meters closer to the Sun or a hundred meters farther away. In contrast, Bohr’s model only allows electrons to be in certain orbits and not between those orbits. Because of this, electrons can only absorb light of colors that correspond to the difference between two valid orbits.
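As an illustration of "a difference between two valid orbits," the four visible hydrogen colors can be computed with the Rydberg formula, 1/λ = R(1/2² − 1/n²), for jumps from orbit n = 3, 4, 5, 6 down to orbit 2. The formula and the approximate value of the constant R are supplied here as assumptions for the sketch; they are not spelled out in the text above.

```python
# Approximate Rydberg constant for hydrogen, in 1/meter (assumed value).
RYDBERG_HYDROGEN = 1.0967758e7


def balmer_wavelength_nm(n_upper: int, n_lower: int = 2) -> float:
    """Wavelength (in nanometers) of light emitted when an electron
    jumps from orbit n_upper down to orbit n_lower."""
    inverse_wavelength = RYDBERG_HYDROGEN * (1 / n_lower**2 - 1 / n_upper**2)
    return 1e9 / inverse_wavelength


for n in (3, 4, 5, 6):
    print(f"orbit {n} -> 2: {balmer_wavelength_nm(n):.1f} nm")
```

The four results fall at roughly 656, 486, 434, and 410 nanometers, which correspond to red, blue-green, and two violet lines.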

Around the same time, other puzzles about matter and light were solved by postulating that atoms and particles behave differently from macroscopic objects. Eventually, this led to the theory of quantum mechanics, which explains all of those differences, using a small number of basic principles.

Quantum mechanics has been an object of much debate. Bohr himself said, “Anyone not shocked by quantum mechanics has not yet understood it.” Albert Einstein believed that quantum mechanics should not be correct. And, even today, popular lectures on quantum mechanics often emphasize the strangeness of quantum mechanics as one of the main points.

But I have a different opinion. The path of how quantum mechanics was discovered was very twisted and complicated. But the end result of this path, the basic principles of quantum mechanics, is quite simple. There are a few things that are different from classical physics and one has to accept those. But, once you accept them, quantum mechanics is simple and natural. Essentially, one can think of quantum mechanics as a generalization of probability theory in which probabilities can be negative.

In the last decades, research in quantum mechanics has been moving into a new stage. Earlier, the goal of researchers was to understand the laws of nature according to how quantum systems function. In many situations, this has been successfully achieved. The new goal is to manipulate and control quantum systems so that they behave in a prescribed way.

This brings the spirit of research closer to computer science. Alan Kay, a distinguished computer scientist, once characterized the difference between natural sciences and computer science in the following way. In natural sciences, Nature has given us the world, and we just discover its laws. In computers, we can stuff the laws into it and create the world. Experiments in quantum physics are now creating artificial physical systems that obey the laws of quantum mechanics but do not exist in nature under normal conditions.

An example of such an artificial quantum system is a quantum computer. A quantum computer encodes information into quantum states and computes by performing quantum operations on it.

There are several tasks for which a quantum computer will be useful. The one that is mentioned most frequently is that quantum computers will be able to read secret messages communicated over the internet using the current technologies (such as RSA, Diffie-Hellman, and other cryptographic protocols that are based on the hardness of number-theoretic problems like factoring and discrete logarithm). But there are many other fascinating applications.

First of all, if we have a quantum computer, it will be useful to scientists for conducting virtual experiments. Quantum computing started with Feynman’s observation that quantum systems are hard to model on a conventional computer. If we had a quantum computer, we could use it to model quantum systems. (This is known as “quantum simulation.”) For example, we could model the behavior of atoms and particles under unusual conditions (for example, very high energies that can only be created in the Large Hadron Collider) without actually creating those unusual conditions. Or we could model chemical reactions, because the interactions among atoms in a chemical reaction are a quantum process.

Another use of quantum computers is searching huge amounts of data. Let’s say that we have a large phone book, ordered alphabetically by individual names (and not by phone numbers). If we wanted to find the person who has the phone number 6097348000, we would have to go through the whole phone book and look at every entry. For a phone book with one million phone numbers, it could take one million steps. In 1996, Lov Grover from Bell Labs discovered that a quantum computer would be able to do the same task with one thousand steps instead of one million.

More generally, quantum computers would be useful whenever we have to find something in a large amount of data: “a needle in a haystack”—whether this is the right phone number or something completely different.
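The square-root saving in the phone book example can be checked with a small classical simulation of Grover's search. This is only a sketch under stated assumptions: the iteration count of about (π/4)·√N comes from the standard analysis of the algorithm rather than from the text above, and the function name is hypothetical. Because all unmarked entries always share the same amplitude, the simulation tracks just two numbers instead of one million.

```python
import math


def grover_search(n_items: int):
    """Classically simulate Grover's search for one marked item among n_items.

    Returns (number of iterations, probability of measuring the marked item).
    """
    # Uniform superposition: every item starts with the same amplitude.
    marked = 1.0 / math.sqrt(n_items)
    unmarked = 1.0 / math.sqrt(n_items)  # amplitude of EACH unmarked item
    iterations = math.floor(math.pi / 4 * math.sqrt(n_items))
    for _ in range(iterations):
        # Oracle step: flip the sign of the marked item's amplitude.
        marked = -marked
        # Diffusion step: reflect every amplitude about the mean amplitude.
        mean = (marked + (n_items - 1) * unmarked) / n_items
        marked = 2 * mean - marked
        unmarked = 2 * mean - unmarked
    return iterations, marked ** 2


steps, success = grover_search(1_000_000)
print(f"{steps} iterations, success probability {success:.6f}")
```

For one million entries the sketch uses 785 iterations, consistent with the "one thousand steps instead of one million" estimate, and the marked entry is then found with probability very close to 1.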

Another example is finding two equal numbers in a large amount of data. Again, if we have one million numbers, a classical computer might have to look at all of them and take one million steps. We discovered that a quantum computer could do it in a substantially smaller amount of time.

All of these achievements of quantum computing are based on the same effects of quantum mechanics. On a high level, these are known as quantum parallelism and quantum interference.

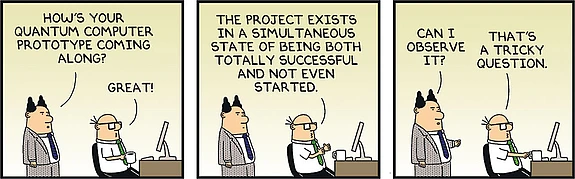

A conventional computer processes information by encoding it into 0s and 1s. If we have a sequence of thirty 0s and 1s, it has about one billion possible values. However, a classical computer can only be in one of these one billion states at any given time. A quantum computer can be in a quantum combination of all of those states, called superposition. This allows it to perform one billion or more copies of a computation at the same time. In a way, this is similar to a parallel computer with one billion processors performing different computations at the same time, with one crucial difference. For a parallel computer, we need to have one billion different processors. In a quantum computer, all one billion computations will be running on the same hardware. This is known as quantum parallelism.

The result of this process is a quantum state that encodes the results of one billion computations. The challenge for a person who designs algorithms for a quantum computer (such as myself) is: how do we access these billion results? If we measured this quantum state, we would get just one of the results. All of the other 999,999,999 results would disappear.

To solve this problem, one uses the second effect, quantum interference. Consider a process that can arrive at the same outcome in several different ways. In the non-quantum world, if there are two possible paths toward one result and each path is taken with a probability ¼, the overall probability of obtaining this result is ¼ + ¼ = ½. Quantumly, the two paths can interfere, increasing the probability of success to 1.
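The numbers in this example can be reproduced with a short sketch. It assumes the usual rule that a quantum path carries an amplitude whose square is its probability, so a path with probability ¼ has amplitude ½, and that amplitudes (not probabilities) are added before squaring. The function names are hypothetical.

```python
import math


def classical_probability(path_probabilities):
    # Classically, probabilities of alternative paths simply add up.
    return sum(path_probabilities)


def quantum_probability(path_amplitudes):
    # Quantumly, amplitudes add first; the total is then squared.
    return abs(sum(path_amplitudes)) ** 2


p = 0.25
amplitude = math.sqrt(p)  # a path with probability 1/4 has amplitude 1/2

print(classical_probability([p, p]))                  # 0.5
print(quantum_probability([amplitude, amplitude]))    # 1.0 (constructive)
print(quantum_probability([amplitude, -amplitude]))   # 0.0 (destructive)
```

The last line shows the other side of interference: if the two amplitudes have opposite signs, the paths cancel and the outcome never occurs. Quantum algorithms arrange for wrong answers to cancel and right answers to reinforce.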

Quantum algorithms combine these two effects. Quantum parallelism is used to perform a large number of computations at the same time, and quantum interference is used to combine their results into something that is both meaningful and can be measured according to the laws of quantum mechanics.

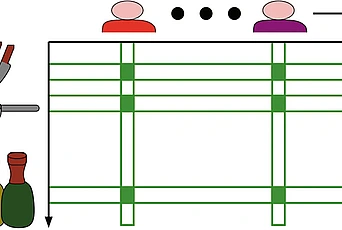

The biggest challenge is building a large-scale quantum computer. There are several ways one could do it. So far, the best results have been achieved using trapped ions. An ion is an atom that has lost one or more of its electrons. An ion trap is a system of electric and magnetic fields that can capture ions and hold them at fixed locations. Using an ion trap, one can arrange several ions in a line, at regular intervals.

One can encode 0 into the lowest energy state of an ion and 1 into a higher energy state. Then, the computation is performed using light to manipulate the states of ions. In an experiment by Rainer Blatt’s group at the University of Innsbruck, Austria, this has been successfully performed for up to fourteen ions. The next step is to scale the technology up to a bigger number of trapped ions.

There are many other paths toward building a quantum computer. Instead of trapped ions, one can use electrons or particles of light (photons). One can even use more complicated objects, for example, the electric current in a superconductor. A very recent experiment by a group led by John Martinis of the University of California, Santa Barbara, has shown how to perform quantum operations on one or two quantum bits with very high precision, from 99.4% to 99.92%, using superconductor technology.

The fascinating thing is that all of these physical systems, from atoms to electric current in a superconductor, behave according to the same physical laws. And they all can perform quantum computation. Moving forward with any of these technologies relates to a fundamental problem in experimental physics: isolating quantum systems from their environment and controlling them with high precision. This is a very difficult and, at the same time, very fundamental task, and being able to control quantum systems will be useful for many other purposes.

Besides building quantum computers, we can use the ideas of information to think about physical laws in terms of information, in terms of 0s and 1s. This is the way I learned quantum mechanics—I started as a computer scientist, and I learned quantum mechanics by learning quantum computing first. And I think this is the best way to learn quantum mechanics.

Quantum mechanics can be used to describe many physical systems, and in each case, there are many technical details that are specific to the particular physical system. At the same time, there is a common set of core principles that all of those physical systems obey.

Quantum information abstracts away from the details that are specific to a particular physical system and focuses on the principles that are common to all quantum systems. Because of that, studying quantum information illuminates the basic concepts of quantum mechanics better than anything else. And, one day, this could become the standard way of learning quantum mechanics.

For myself, the main question still is: how will quantum computers be useful? We know that they will be faster for many computational tasks, from modeling nature to searching large amounts of data. I think there are many more applications and, perhaps, the most important ones are still waiting to be discovered.