Harol Bustos for Quanta Magazine

In Neural Networks, Unbreakable Locks Can Hide Invisible Doors

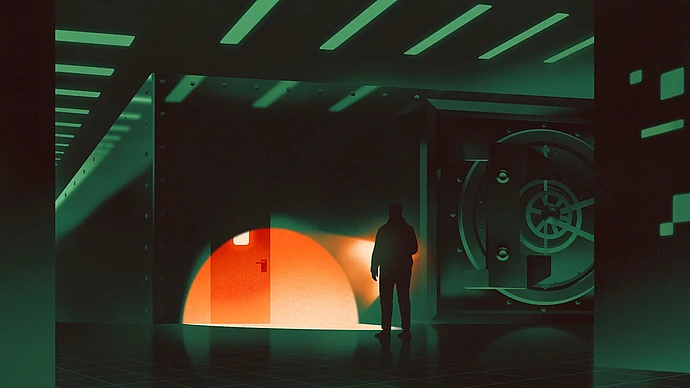

"More recently, [Shafi] Goldwasser has worked to bring the same rigor to the study of vulnerabilities in machine learning algorithms. She teamed up with [Vinod] Vaikuntanathan and the postdoctoral researchers Michael Kim, of the University of California, Berkeley, and Or Zamir, of the Institute for Advanced Study in Princeton, New Jersey, to study what kinds of backdoors are possible. In particular, the team wanted to answer one simple question: Could a backdoor ever be completely undetectable?"

Read more at Quanta.